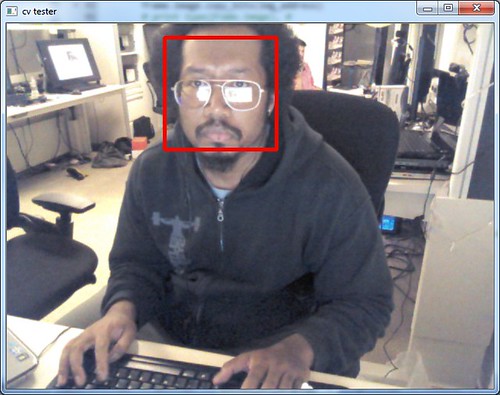

OpenCV...one of the few things that doesn't break when it sees my ugly mug...

That said, the thing that caught my eye most at Maker Faire was SimpleCV, which is a wrapper around OpenCV (ya think) and a few other super useful libraries. They had a few really cool demos running, but what really got my attention was being able to look at the code and see how simple it was. Not that OpenCV by itself doesn't have a ton of functionality already; SimpleCV just adds a few more convenience functions, not to mention some slick blob finding stuff (among other things). The big (and very specific) hurdle I ran into is that SimpleCV uses libfreenect, specifically the Python bindings, for Kinect access, and from everything I read online, getting the freenect Python bindings to play nice with Windows is a bit of a chore. I must say, in the last week or so, I've been amazed at how many tasks I've undertaken that turned out to be...not so simple or well documented. That means I'm either truly blazing the trail or I'm doing it horribly wrong and missing the obvious solution. Let's assume the latter.

Anyway, after a day of trying to build the Python freenect bindings and only being somewhat successful, I decided to see if I could get SimpleCV and pykinect to play nice together. Thanks to some hard work done by another Codeplex-er who goes by the handle bunkus, I got pykinect and the OpenCV Python bindings talking. From there it was a pretty simple step to get pykinect and SimpleCV talking, since SimpleCV talks straight through OpenCV. Well, it would've been if I'd remembered to install PIL...As much time as I'd spent digging through the SimpleCV ImageClass source, you'd think the dependencies would've been burned into my brain.

But you know, at the end of the day, I've got pykinect streaming into SimpleCV, which is a good thing. There's been a ton of interesting work done in the open source Kinect space, but having had my fill of trying to deal with OpenNI, I'm more than happy to use a supported, actively iterated SDK with the most current features. Who knows what I might want to do next? So here's some code. Yes, I know, it's horrible and un-Pythonic and there are globals all over the place, but it works...cleanup is next. Actually, next I'm going to work my way through the SimpleCV book and see what happens. This + multiple Kinects? If performance doesn't murder me, could be pretty cool...

    import array
    import thread

    import cv
    import SimpleCV
    import pykinect.nui


    def frame_ready(frame):
        # Called by pykinect each time a new video frame arrives.
        global disp, screen_lock, img_address, img_bytes, cv_img, cv_img_3, alpha_img
        with screen_lock:
            # Copy the raw 4-channel frame into our byte buffer, then into an OpenCV image.
            frame.image.copy_bits(img_address)
            cv.SetData(cv_img, img_bytes.tostring())
            # Channels 0-2 go to the 3-channel image, channel 3 goes to the spare 1-channel image.
            cv.MixChannels([cv_img], [cv_img_3, alpha_img], [(0, 0), (1, 1), (2, 2), (3, 3)])
            scv_img = SimpleCV.Image(cv_img_3)
            scv_img.save(disp)


    if __name__ == '__main__':
        screen_lock = thread.allocate()

        # Preallocated OpenCV images: 4-channel source, 3-channel color, 1-channel leftover.
        cv_img = cv.CreateImage((640, 480), cv.IPL_DEPTH_8U, 4)
        cv_img_3 = cv.CreateImage((640, 480), cv.IPL_DEPTH_8U, 3)
        alpha_img = cv.CreateImage((640, 480), cv.IPL_DEPTH_8U, 1)

        # Raw byte buffer the Kinect frame gets copied into (640 x 480 pixels, 4 bytes each).
        img_bytes = array.array('c', '0' * 640 * 480 * 4)
        img_address = img_bytes.buffer_info()[0]

        disp = SimpleCV.Display()

        kinect = pykinect.nui.Runtime()
        kinect.video_frame_ready += frame_ready
        kinect.video_stream.open(pykinect.nui.ImageStreamType.Video, 2, pykinect.nui.ImageResolution.Resolution640x480, pykinect.nui.ImageType.Color)
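To make the `cv.MixChannels` call a little less magic: the pair list maps source channel i to destination channel i, counted across both destination images, so channels 0 to 2 land in the 3-channel image and channel 3 lands in the spare 1-channel image. Here's a rough pure-Python sketch of that same split on a flat byte buffer. The name `split_bgra` is made up for illustration, I'm assuming the frame arrives as interleaved 4-byte pixels, and it's written for Python 3 so it runs on its own without any of the libraries above.

```python
def split_bgra(buf):
    """Split interleaved 4-byte pixels into (3-channel bytes, 4th-channel bytes)."""
    if len(buf) % 4:
        raise ValueError("buffer length must be a multiple of 4")
    color = bytearray(len(buf) // 4 * 3)
    # Extended slices copy every 4th source byte into every 3rd
    # destination byte, one channel at a time.
    color[0::3] = buf[0::4]
    color[1::3] = buf[1::4]
    color[2::3] = buf[2::4]
    # Whatever sits in the 4th byte of each pixel (assumed alpha/padding).
    extra = bytes(buf[3::4])
    return bytes(color), extra


# Two made-up pixels: (1, 2, 3, 255) and (4, 5, 6, 0)
pixels = bytes([1, 2, 3, 255, 4, 5, 6, 0])
color, extra = split_bgra(pixels)
```

This is only meant to show the channel bookkeeping; for real frames the OpenCV call is going to be far faster than slicing in Python.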

Hmm, I see SimpleCV.Image also takes numpy arrays as a parameter...might be able to bypass the external OpenCV calls entirely. Good weekend project.
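A possible starting point for that, as an untested-against-the-Kinect sketch: view the raw frame bytes as a numpy array and slice off the fourth channel. The helper name `bgra_buffer_to_bgr` and the fake frame are hypothetical, the 640x480, 4-bytes-per-pixel layout is taken from the listing above, and the code is Python 3 with only numpy so it runs standalone. How SimpleCV.Image interprets the axis order and channel order of a numpy array is something I haven't checked, so the SimpleCV call itself is left out.

```python
import numpy as np

WIDTH, HEIGHT = 640, 480


def bgra_buffer_to_bgr(buf, width=WIDTH, height=HEIGHT):
    """View a flat 4-bytes-per-pixel buffer as a (height, width, 3) uint8 array."""
    # frombuffer creates a view over the bytes rather than copying them.
    frame = np.frombuffer(buf, dtype=np.uint8).reshape(height, width, 4)
    # Keep the first three channels, drop the fourth (assumed alpha/padding).
    return frame[:, :, :3]


# Fake frame standing in for the Kinect buffer: every pixel is (10, 20, 30, 255).
fake_frame = bytes([10, 20, 30, 255]) * (WIDTH * HEIGHT)
bgr = bgra_buffer_to_bgr(fake_frame)
```

If the array needs transposing or a channel swap before SimpleCV is happy with it, that would be one more numpy operation on `bgr` rather than another round trip through `cv`.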
